The Concept of Estimation

Start by identifying what you’re uncertain about

Reducing uncertainty starts with clearly identifying what you’re uncertain about, and the way to do that is decomposition. Break down the question, classify, compare, discriminate, distinguish, and so on, until you can reason about the components independently. Then ask why each component matters. What are the possible outcomes? What decisions would additional information affect?

For example, maybe one of the things you’re uncertain about in a supposedly RESTful API is its error handling. It matters because if some errors are reported as a 200 response with an embedded error message, you have a lot more work to do than if the API consistently returns 4xx responses for errors. Instead of “adding a couple of days, in case…”, you widen the range until you feel confident that the actual effort will fall somewhere within it, however this question plays out.
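One way to picture “widen the range until it holds regardless of the answer” is to estimate each outcome of the open question separately and then take the smallest range that covers all of them. The sketch below assumes estimates are low/high pairs in days; the `Range` type, the `covering_range` helper, and the numbers are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Range:
    """An effort estimate expressed as a low/high range, in days (hypothetical type)."""
    low: float
    high: float


def covering_range(*scenarios: Range) -> Range:
    """Return the smallest range that contains every scenario's range,
    so the estimate holds however the open question turns out."""
    return Range(min(s.low for s in scenarios), max(s.high for s in scenarios))


# Hypothetical estimates for the two ways the error-handling question could go.
consistent_4xx = Range(low=3, high=5)   # errors reported with 4xx status codes
errors_in_200 = Range(low=5, high=9)    # errors embedded in 200 responses

estimate = covering_range(consistent_4xx, errors_in_200)
print(estimate)  # Range(low=3, high=9)
```

The combined range is wider than either scenario alone, which is the point: the width records the unresolved question instead of hiding it in padding.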

Range estimation doesn’t have to take any more time; you’re just expressing the estimate differently. And if range estimation gives you a better grasp of your uncertainty, and you then spend more time reducing that uncertainty, we’ll take that as a good thing.

There is an art in lighting a fire. We have the liberal arts and we have the useful arts. This is one of the useful arts.

— James Joyce — Ulysses

If your actual uncertainty produces a range that is too wide for comfort, work out which questions would narrow it if you had the answers.

Uncertainty is reduced by obtaining new information through observations about those questions. When observations are expressed quantitatively, that constitutes a measurement. Thus, a measurement is a quantitatively expressed reduction of uncertainty based on observations.
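As a minimal sketch of “a quantitatively expressed reduction of uncertainty”, assume an estimate is a (low, high) range and express an observation’s effect as the fraction by which it narrowed that range. This width-based ratio is just one simple choice made for illustration, not a standard definition; the function name and the numbers are hypothetical.

```python
def uncertainty_reduction(before: tuple[float, float], after: tuple[float, float]) -> float:
    """Fraction by which an observation narrowed an estimate range.

    Ranges are (low, high) pairs. 0.0 means no reduction; 1.0 means the
    range collapsed to a single value.
    """
    width_before = before[1] - before[0]
    width_after = after[1] - after[0]
    if width_before <= 0:
        raise ValueError("the prior range must have positive width")
    return 1 - width_after / width_before


# Hypothetical: 3-9 days before probing the API; 5-8 days after a quick spike
# shows that errors come back inside 200 responses.
print(uncertainty_reduction((3, 9), (5, 8)))  # 0.5
```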

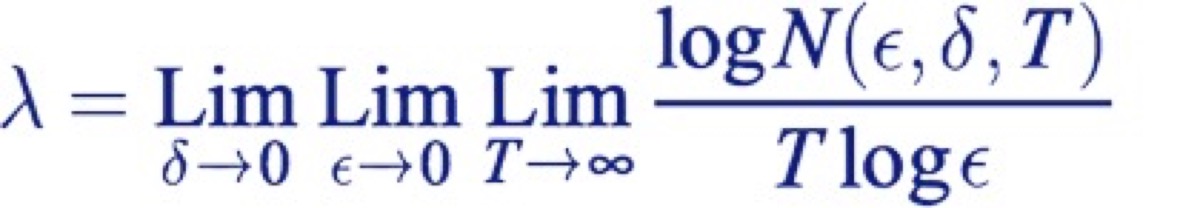

Here’s a more precise way of saying the same thing, from a 1948 paper called “A Mathematical Theory of Communication” by a Bell Labs researcher named Claude Shannon.

Shannon proposed that information is the amount by which a signal reduces uncertainty. His conception of information as uncertainty reduction was fundamental to the emergence of information theory, the advent of signal processing, the development of integrated circuits, the gzip compression algorithm, MPEG, MP3, and an array of other innovations that still figure prominently in questions like the ones that brought us together today.

Thinking of information as uncertainty reduction opens up a world of measurement in which uncertain values are first-class citizens, and in which we can more easily account for intangibles we once dismissed as not subject to precise measurement. Shannon quantified uncertainty as entropy, so reducing uncertainty means removing entropy from the thing being measured, relative to its prior state. Repeating this process of uncertainty reduction increases the fidelity of the signal.
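To make “removal of entropy relative to its prior state” concrete, the sketch below computes Shannon entropy, H = −Σ p·log₂(p), for a distribution before and after an observation; the difference is the information gained, in bits. The four effort buckets and their probabilities are hypothetical.

```python
import math


def entropy_bits(probabilities: list[float]) -> float:
    """Shannon entropy, in bits, of a discrete probability distribution.
    Zero-probability outcomes contribute nothing, so they are skipped."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


# Hypothetical prior: four equally likely effort buckets (e.g. 1-2, 3-4, 5-6, 7-8 days).
prior = [0.25, 0.25, 0.25, 0.25]
# Hypothetical posterior: an observation rules out the two lowest buckets.
posterior = [0.0, 0.0, 0.5, 0.5]

information_gained = entropy_bits(prior) - entropy_bits(posterior)
print(information_gained)  # 1.0
```

Halving the number of equally likely outcomes removes exactly one bit; an observation that settled the question entirely would remove all two bits.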

Let’s agree to define productivity in terms of throughput. We could debate additional measurements of the business value of delivered work, but as Eliyahu Goldratt pointed out in his critique of the Balanced Scorecard, there is virtue in simplicity. Throughput doesn’t answer all our questions about business value, but it is sufficient for evaluating the relationship between practices and productivity.
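The text doesn’t fix a unit for throughput, so the sketch below assumes the common reading of completed work items per week, grouped by ISO week. The `weekly_throughput` function and the sample dates are hypothetical.

```python
from collections import Counter
from datetime import date


def weekly_throughput(completed: list[date]) -> dict[tuple[int, int], int]:
    """Count completed work items per ISO (year, week), in chronological order."""
    counts = Counter(d.isocalendar()[:2] for d in completed)
    return dict(sorted(counts.items()))


# Hypothetical completion dates for three work items.
done = [date(2024, 1, 1), date(2024, 1, 3), date(2024, 1, 9)]
print(weekly_throughput(done))  # {(2024, 1): 2, (2024, 2): 1}
```

Counting items rather than weighting them by value keeps the metric simple, in keeping with the argument above; any weighting scheme would reintroduce the debate about business value.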